The Circuit Breaker pattern is a resilience strategy that allows your application to avoid sending requests to a failing service. When a downstream service starts failing, the Circuit Breaker detects the failure, pauses further requests for a configured period of time, and then checks whether the service has recovered. This protects both your application and the downstream service, as without it, your app would keep sending requests to a failing service, wasting resources, adding unnecessary load, and making it even harder for the failing service to recover. In this article, I explain how the Circuit Breaker works and demonstrate with practical examples how to use it in a .NET 10 Web API using Microsoft’s HTTP resilience package, which is powered by Polly.

This is the third article of the series "Building a Resilient .NET Web API". Below you can find the previous articles of this series:

- Part 1 - Introduction to Resilience: https://henriquesd.com/articles/building-a-resilient-net-web-api-part-1

- Part 2 - Retry Strategies: https://henriquesd.com/articles/building-a-resilient-net-web-api-part-2

What is the Circuit Breaker Pattern

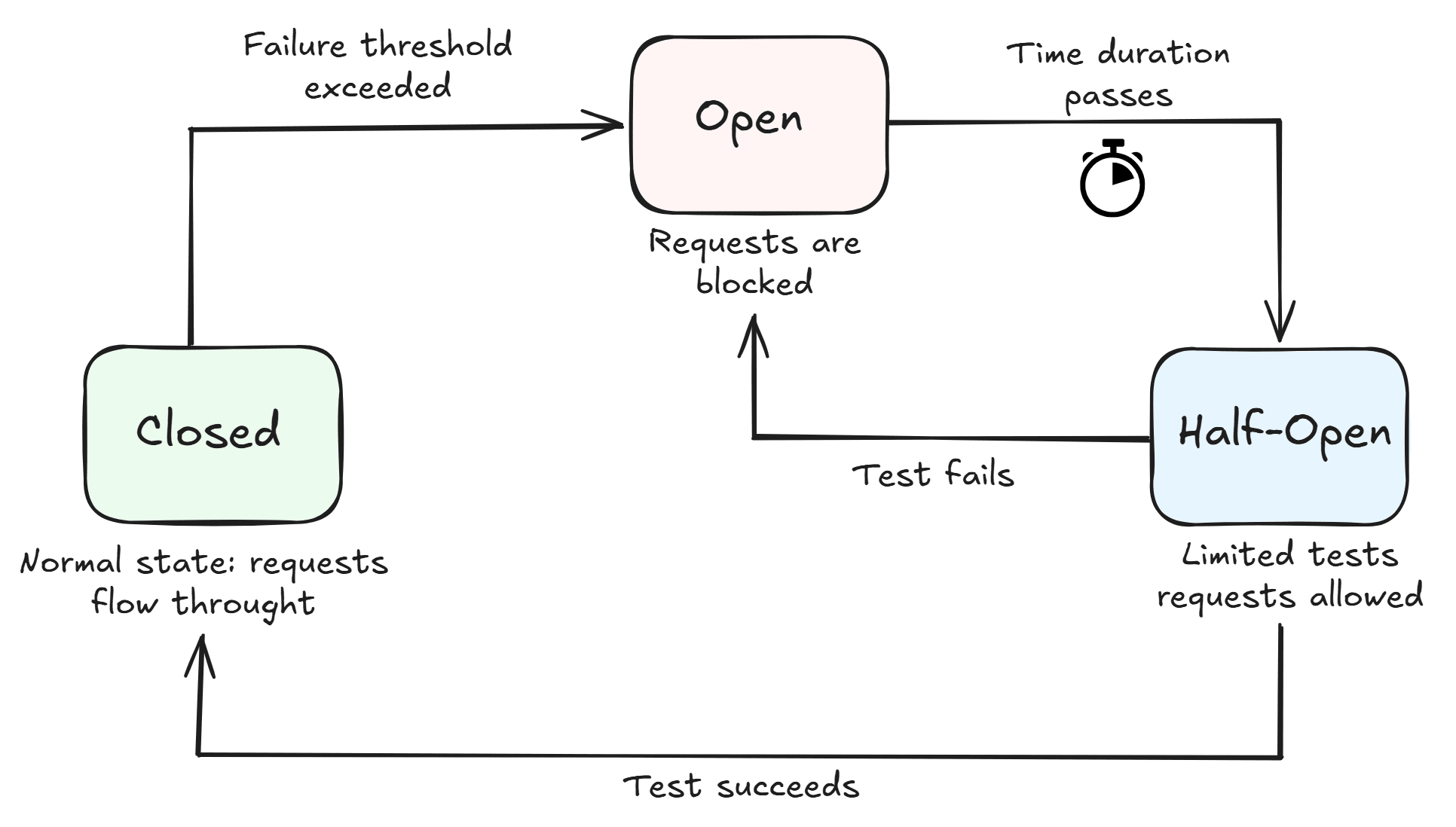

The Circuit Breaker pattern is one of the most important resilience patterns in distributed systems, and it is inspired by electrical circuit breakers (like the ones in your home electrical panel): when a fault is detected, the circuit "opens" to prevent further damage. In software, the circuit breaker monitors the success and failure rate of requests to a downstream service and transitions between three states:

- Closed: The normal operating state. Requests go through to the downstream service while the circuit breaker monitors the failure rate. If the failure rate reaches the configured threshold within a certain time window, the circuit transitions to Open.

- Open: Requests are rejected immediately, without calling the downstream service. This gives the failing service time to recover. After the configured break duration, the circuit transitions to Half-Open.

- Half-Open: The circuit breaker allows a limited number of test requests through to the downstream service. If the test requests succeed, the circuit transitions back to Closed. If they fail, the circuit returns to Open.
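The three states can be sketched as a small state machine. The following is a minimal, hypothetical example in plain C# (no Polly involved). To keep it short, it opens the circuit after a fixed number of consecutive failures instead of a failure ratio over a sampling window, lets exactly one test request through in Half-Open, and takes the current time as a parameter. The names (TryAcquire, RecordOutcome, failureThreshold) are illustrative only and are not part of any library.

```csharp
using System;

// Hypothetical, simplified circuit breaker for illustration only (not Polly's
// implementation): it opens after a number of consecutive failures instead of
// using a failure ratio over a sampling window.
const int failureThreshold = 3;
var breakDuration = TimeSpan.FromSeconds(5);

var state = "Closed";              // "Closed", "Open" or "Half-Open"
var consecutiveFailures = 0;
var openedAt = DateTimeOffset.MinValue;

// Returns true if a request may be sent to the downstream service at `now`.
bool TryAcquire(DateTimeOffset now)
{
    if (state == "Open" && now - openedAt >= breakDuration)
    {
        state = "Half-Open";       // break is over: let one test request through
        return true;
    }

    // Closed lets requests through; Open rejects them, and so does Half-Open
    // while its single test request is still in flight.
    return state == "Closed";
}

// Records the outcome of a request that TryAcquire allowed through.
void RecordOutcome(bool success, DateTimeOffset now)
{
    if (success)
    {
        state = "Closed";          // normal success, or a successful test request
        consecutiveFailures = 0;
        return;
    }

    consecutiveFailures++;
    if (state == "Half-Open" || consecutiveFailures >= failureThreshold)
    {
        state = "Open";            // failed test request, or threshold reached
        openedAt = now;
        consecutiveFailures = 0;
    }
}

// Walk through Closed -> Open -> Half-Open -> Closed.
var start = DateTimeOffset.UnixEpoch;
for (var i = 0; i < failureThreshold; i++)
{
    if (TryAcquire(start))
    {
        RecordOutcome(success: false, start);
    }
}
Console.WriteLine(state);                            // Open
Console.WriteLine(TryAcquire(start.AddSeconds(1)));  // False: rejected while open
Console.WriteLine(TryAcquire(start.AddSeconds(5)));  // True: the Half-Open test request
RecordOutcome(success: true, start.AddSeconds(5));
Console.WriteLine(state);                            // Closed
```

Passing the time in explicitly (rather than reading the clock inside the breaker) keeps the transitions deterministic, which makes the state changes easy to follow and to test.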

In the Diagram below, you can see an example of the flow:

Real-world use case examples

For example, think of a Checkout flow that integrates with a third-party payment provider. If that provider starts timing out or returning errors, and your application continues to send requests, it can increase latency for users, overload the failing service even further, and potentially create duplicate or inconsistent transactions. With a Circuit Breaker in place, once a failure threshold is reached, the requests are temporarily stopped. The application can then fail fast or switch to a fallback (e.g., “Try again later”), keeping the user experience predictable instead of degrading unpredictably.

As another example, consider a scenario where your application depends on a third-party API that enforces rate limits or occasionally becomes unavailable. If your application keeps retrying aggressively during these periods, it can make the rate limiting worse and waste resources on requests that are likely to fail. With a Circuit Breaker in place, repeated failures such as HTTP 429 (Too Many Requests) or 503 (Service Unavailable) are detected, and outgoing calls are paused for a cooldown period. After that, the system gradually allows requests again to check whether the service has recovered, preventing unnecessary load and improving overall stability.

The UnreliableWeatherApi

To demonstrate the Circuit Breaker, I created a new endpoint on the UnreliableWeatherApi, which simulates a service going down and recovering in cycles. You will see the circuit open during failures and close again when the service recovers. This is the WeatherController with the new unstable endpoint:

```csharp
using System.Diagnostics;
using Microsoft.AspNetCore.Mvc;

[ApiController]
[Route("api/[controller]")]
public class WeatherController : ControllerBase
{
    private static readonly Stopwatch _unstableTimer = Stopwatch.StartNew();

    [HttpGet("unstable")]
    public IActionResult GetUnstable()
    {
        var secondsInCycle = _unstableTimer.Elapsed.TotalSeconds % 20;

        if (secondsInCycle < 12)
        {
            return StatusCode(500, new { Message = "Service is down" });
        }

        return Ok(new { Temperature = 18, Summary = "Windy" });
    }
}
```

- The GetUnstable method creates a repeating 20-second cycle, using the elapsed time modulo 20: the first 12 seconds return a 500 error to simulate downtime, and the remaining 8 seconds return a successful response with weather data.

- On line 8, a static Stopwatch is started once, when the WeatherController type is initialised, and is shared by all requests. It is used to simulate a service going down and recovering.

- On line 13, _unstableTimer.Elapsed.TotalSeconds % 20 calculates the current position within the cycle.

- On lines 15 to 18, during the first 12 seconds of each cycle, the endpoint returns a 500 error, simulating a service outage.

- On line 20, during the remaining 8 seconds, it returns a successful response with weather data.
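The modulo arithmetic is easier to follow with concrete numbers. This standalone sketch (plain C#, no ASP.NET Core; IsFailing is a hypothetical helper that mirrors the condition in GetUnstable) prints the phase the endpoint would be in at a few elapsed times. For example, 33 seconds falls at position 13 of the second cycle (33 % 20 = 13), so that request succeeds.

```csharp
using System;

// Same condition as GetUnstable: fail during the first 12 seconds of every 20-second cycle.
static bool IsFailing(double elapsedSeconds) => elapsedSeconds % 20 < 12;

foreach (var elapsed in new[] { 0.0, 11.9, 12.0, 19.9, 20.0, 33.0, 45.0 })
{
    var positionInCycle = elapsed % 20;
    var result = IsFailing(elapsed) ? "500 Service is down" : "200 OK";
    Console.WriteLine($"elapsed {elapsed,5:F1}s, position in cycle {positionInCycle,4:F1}s -> {result}");
}
```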

Configuring the Circuit Breaker

The Microsoft.Extensions.Http.Resilience package provides the HttpCircuitBreakerStrategyOptions class for configuring Polly's circuit breaker with HttpClient. The key configuration properties are:

- FailureRatio: The proportion of failed requests (a value between 0 and 1) that triggers the circuit to open. For example, a value of 0.5 means the circuit opens when 50% or more of the requests within the sampling window fail.

- SamplingDuration: The time window during which failures are measured. For example, 10 seconds means Polly evaluates the failure ratio over the last 10 seconds of requests.

- MinimumThroughput: The minimum number of requests that must occur within the sampling window before the circuit breaker can open. This prevents the circuit from opening on the very first failed request or on just a few early ones.

- BreakDuration: How long the circuit stays open before transitioning to Half-Open to try again. During this period, all requests are rejected immediately, giving the downstream service a chance to recover.
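To see how FailureRatio and MinimumThroughput interact, here is a small, hypothetical helper that applies a simplified version of the two checks to the counts observed in one sampling window. ShouldOpen is an illustrative name, not part of Polly's API, and Polly tracks these counts internally over a rolling window rather than exposing them like this.

```csharp
using System;

// Hypothetical helper: should the circuit open, given the counts observed
// within the current sampling window?
static bool ShouldOpen(int failures, int totalRequests, double failureRatio, int minimumThroughput)
{
    if (totalRequests < minimumThroughput)
    {
        return false; // not enough traffic yet to make a decision
    }

    var observedRatio = (double)failures / totalRequests;
    return observedRatio >= failureRatio;
}

// Using the values from this article: FailureRatio = 0.5, MinimumThroughput = 3.
Console.WriteLine(ShouldOpen(failures: 2, totalRequests: 2, failureRatio: 0.5, minimumThroughput: 3)); // False: only 2 requests
Console.WriteLine(ShouldOpen(failures: 3, totalRequests: 3, failureRatio: 0.5, minimumThroughput: 3)); // True: 100% >= 50%
Console.WriteLine(ShouldOpen(failures: 2, totalRequests: 5, failureRatio: 0.5, minimumThroughput: 3)); // False: 40% < 50%
Console.WriteLine(ShouldOpen(failures: 3, totalRequests: 6, failureRatio: 0.5, minimumThroughput: 3)); // True: 50% >= 50%
```

The first call shows why MinimumThroughput matters: two failures out of two requests is a 100% failure ratio, but the circuit stays closed because there is not yet enough traffic to judge.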

Adding the Circuit Breaker Extension Method

Following the same extension method pattern from the previous article, let's add the circuit breaker HttpClient registration to the HttpClientResilienceExtensions class:

```csharp
public static IServiceCollection AddCircuitBreakerClient(this IServiceCollection services, Uri baseAddress)
{
    services.AddHttpClient("UnreliableWeatherApi-CircuitBreaker", client =>
    {
        client.BaseAddress = baseAddress;
    })
    .AddResilienceHandler("circuit-breaker", pipelineBuilder =>
    {
        pipelineBuilder.AddCircuitBreaker(new HttpCircuitBreakerStrategyOptions
        {
            FailureRatio = 0.5,
            SamplingDuration = TimeSpan.FromSeconds(10),
            MinimumThroughput = 3,
            BreakDuration = TimeSpan.FromSeconds(5),
            OnOpened = args =>
            {
                Console.WriteLine($"Circuit OPENED. Break duration: {args.BreakDuration.TotalSeconds:F1}s");
                return default;
            },
            OnClosed = args =>
            {
                Console.WriteLine("Circuit CLOSED. Requests flowing normally.");
                return default;
            },
            OnHalfOpened = args =>
            {
                Console.WriteLine("Circuit HALF-OPENED. Testing with next request...");
                return default;
            }
        });
    });

    return services;
}
```

- On line 9, AddCircuitBreaker is called with HttpCircuitBreakerStrategyOptions. This is the HTTP-specific version of the circuit breaker options, which already treats transient HTTP errors, such as 5xx responses, as failures.

- On line 11, FailureRatio is set to 0.5, meaning the circuit opens when 50% or more of requests fail.

- On line 12, SamplingDuration is set to 10 seconds, meaning Polly evaluates failures over the last 10 seconds of requests.

- On line 13, MinimumThroughput is set to 3, meaning that at least 3 requests must occur within the sampling window before the circuit breaker can open. This prevents the circuit from opening on just one or two early failures.

- On line 14, BreakDuration is set to 5 seconds. After the circuit opens, it stays open for 5 seconds before trying again in half-open mode.

- On lines 15 to 19, the OnOpened callback logs when the circuit opens, including the break duration.

- On lines 20 to 24, the OnClosed callback logs when the circuit transitions back to the closed state.

- On lines 25 to 29, the OnHalfOpened callback logs when the circuit transitions to the half-open state.

HttpCircuitBreakerStrategyOptions vs CircuitBreakerStrategyOptions

There are two options classes you can use to configure the circuit breaker:

- CircuitBreakerStrategyOptions<T>: The generic version from Polly, where you define ShouldHandle to specify which outcomes count as failures. By default, it only handles exceptions (except OperationCanceledException), so failed results such as HTTP 500 responses are not counted unless your predicate says so.

- HttpCircuitBreakerStrategyOptions: The HTTP-specific version provided by Microsoft.Extensions.Http.Resilience. It extends CircuitBreakerStrategyOptions<HttpResponseMessage> and comes pre-configured to treat HTTP 5xx, 408 (Request Timeout), and 429 (Too Many Requests) responses, as well as HttpRequestException and Polly's TimeoutRejectedException, as failures.

By using HttpCircuitBreakerStrategyOptions, you do not need to configure ShouldHandle manually, because its default predicate already knows which HTTP responses indicate a failure. For most scenarios with HttpClient, this is the recommended choice.
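If you use the generic CircuitBreakerStrategyOptions<HttpResponseMessage> instead, your ShouldHandle predicate has to encode this classification itself. The framework-free sketch below shows the kind of check involved. IsHandledFailure is a hypothetical helper, not a library method: it counts 5xx, 408, and 429 responses and HttpRequestException as failures, roughly matching what the HTTP-specific options handle by default. Polly's TimeoutRejectedException is left out here to avoid a package dependency.

```csharp
using System;
using System.Net;
using System.Net.Http;

// Hypothetical predicate (not the library's code) that classifies an outcome
// as a failure the circuit breaker should count: 5xx, 408 or 429 responses,
// or an HttpRequestException thrown instead of a response.
static bool IsHandledFailure(HttpResponseMessage? response, Exception? exception)
{
    if (exception is HttpRequestException)
    {
        return true;
    }

    if (response is null)
    {
        return false;
    }

    var statusCode = (int)response.StatusCode;
    return statusCode >= 500
        || response.StatusCode == HttpStatusCode.RequestTimeout   // 408
        || response.StatusCode == HttpStatusCode.TooManyRequests; // 429
}

Console.WriteLine(IsHandledFailure(new HttpResponseMessage(HttpStatusCode.InternalServerError), null)); // True
Console.WriteLine(IsHandledFailure(new HttpResponseMessage(HttpStatusCode.TooManyRequests), null));     // True
Console.WriteLine(IsHandledFailure(new HttpResponseMessage(HttpStatusCode.NotFound), null));            // False
Console.WriteLine(IsHandledFailure(null, new HttpRequestException("connection refused")));              // True
```

Note that 4xx responses such as 404 are not counted: they usually indicate a problem with the request, not an unhealthy downstream service, so they should not trip the circuit.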

Registering the Circuit Breaker in Program.cs

Once the extension method is created, adding the circuit breaker client in Program.cs is straightforward. You just chain it along with your other HTTP clients:

```csharp
using HttpResilienceDemo.ResilientApi.Extensions;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddControllers();

var unreliableWeatherApiBaseAddress = new Uri(builder.Configuration["UnreliableWeatherApi:BaseAddress"]!);

builder.Services
    .AddConstantRetryClient(unreliableWeatherApiBaseAddress)
    .AddLinearRetryClient(unreliableWeatherApiBaseAddress)
    .AddExponentialRetryClient(unreliableWeatherApiBaseAddress)
    .AddSelectiveRetryClient(unreliableWeatherApiBaseAddress)
    .AddRetryAfterClient(unreliableWeatherApiBaseAddress)
    .AddCircuitBreakerClient(unreliableWeatherApiBaseAddress);

var app = builder.Build();

app.UseHttpsRedirection();
app.MapControllers();

app.Run();
```

- On line 15, AddCircuitBreakerClient is chained to the existing resilience registrations (which were created in the previous article). This registers the UnreliableWeatherApi-CircuitBreaker named HttpClient with the circuit breaker resilience handler attached, so requests sent through it automatically stop when the downstream service is failing and resume once the service recovers.

The Circuit Breaker Controller

Now let's take a look at the GetWithCircuitBreaker method on the CircuitBreakerController that calls the UnreliableWeatherApi's unstable endpoint using the circuit breaker client:

```csharp
[HttpGet("weather")]
public async Task<IActionResult> GetWithCircuitBreaker()
{
    try
    {
        var client = _httpClientFactory.CreateClient("UnreliableWeatherApi-CircuitBreaker");
        using var response = await client.GetAsync("/api/weather/unstable");

        if (response.IsSuccessStatusCode)
        {
            var content = await response.Content.ReadAsStringAsync();
            return Content(content, "application/json");
        }

        return StatusCode((int)response.StatusCode);
    }
    catch (BrokenCircuitException)
    {
        return StatusCode(503, new { Message = "Circuit breaker is open. Service is temporarily unavailable." });
    }
}
```

- The method is wrapped in a try-catch block. This is different from the retry controller endpoints, because the circuit breaker throws an exception when the circuit is open.

- On line 6, the UnreliableWeatherApi-CircuitBreaker named client is created. This client has the circuit breaker resilience handler attached. On line 7, the request is sent to the /api/weather/unstable endpoint.

- On lines 9 to 13, if the response is successful, the content is returned as JSON; otherwise, on line 15, the downstream status code is forwarded.

- On lines 17 to 20, the BrokenCircuitException (from the Polly.CircuitBreaker namespace) is caught. When the circuit is open, Polly does not send the request to the downstream service; it immediately throws this exception. The controller returns a 503 status code with a message explaining that the circuit breaker is open.

One key difference from the retry pattern is how failures reach your code. With retries, when the downstream service keeps returning error responses, the pipeline still gives you an HttpResponseMessage (from the last attempt). With a circuit breaker, when the circuit is open, there is no HttpResponseMessage at all; instead, Polly throws a BrokenCircuitException, which you need to catch and handle explicitly.

Observing the Circuit States

Let’s see the circuit breaker in action by making repeated requests to the /api/circuit-breaker/weather endpoint while the UnreliableWeatherApi is in its failure phase. Here is what happens step by step:

1. Initial requests: Circuit is Closed:

At first, the circuit is closed, and requests go through normally. The first few requests fail with 500 errors from the UnreliableWeatherApi. Polly monitors these failures over the 10-second sampling window:

```
Request 1 → 500 (failure counted)
Request 2 → 500 (failure counted)
Request 3 → 500 (failure ratio = 100%, minimum throughput of 3 reached)
Circuit OPENED. Break duration: 5.0s
```

After three failed requests, the failure ratio reaches 100% (above the 50% threshold), and the minimum throughput of 3 has been reached. At this point, the circuit opens, preventing further requests from hitting the downstream service.

2. Circuit is Open: Requests are rejected:

For the next 5 seconds (the break duration), all requests are rejected immediately, without ever reaching the UnreliableWeatherApi:

```
Request 4 → 503 "Circuit breaker is open. Service is temporarily unavailable."
Request 5 → 503 "Circuit breaker is open. Service is temporarily unavailable."
```

During this phase, the UnreliableWeatherApi receives no traffic from the ResilientApi at all, which gives the service time to recover.

3. Circuit transitions to Half-Open:

After the 5-second break duration ends, the circuit transitions to the half-open state:

```
Circuit HALF-OPENED. Testing with next request...
```

4. Test request in Half-Open state:

The next request is allowed through as a test:

- If the UnreliableWeatherApi has recovered (during the 8-second success window), the test request succeeds, and the circuit transitions back to Closed:

```
Request 6 → 200 OK
Circuit CLOSED. Requests flowing normally.
```

- If the UnreliableWeatherApi is still failing, the test request fails, and the circuit returns to Open for another break duration:

```
Request 6 → 500 (test failed)
Circuit OPENED. Break duration: 5.0s
```

Running the Demo

To run the demo, start both applications. First, start the UnreliableWeatherApi:

```
dotnet run --project src/HttpResilienceDemo.UnreliableWeatherApi
```

Then, in a separate terminal, start the ResilientApi:

```
dotnet run --project src/HttpResilienceDemo.ResilientApi
```

Now make repeated requests to the circuit breaker endpoint. You can use a loop in PowerShell to send multiple requests:

```powershell
for ($i = 1; $i -le 20; $i++) {
    Write-Host "Request $i :"
    try {
        Invoke-RestMethod https://localhost:5002/api/circuit-breaker/weather
    } catch {
        Write-Host $_.Exception.Message
    }
    Start-Sleep -Seconds 1
}
```

Or with curl in bash:

```bash
for i in $(seq 1 20); do
    echo "Request $i:"
    curl -s https://localhost:5002/api/circuit-breaker/weather
    echo
    sleep 1
done
```

Watch the ResilientApi console output while making the requests to see the circuit state transitions in action. You will notice the circuit open after the initial failures, reject requests during the break duration, transition to half-open, and finally close again when the UnreliableWeatherApi recovers.

Note that the UnreliableWeatherApi's unstable endpoint follows a 20-second cycle: 12 seconds of failure followed by 8 seconds of success. Depending on when you start sending requests, the timing of the circuit transitions may vary slightly.
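To get a feel for how the 20-second cycle and this article's settings interact, here is a self-contained simulation in plain C# (no Polly, no HTTP) of one request per second, similar to the loops above. It uses a simplified, hypothetical model of the breaker: outcomes are tracked over the last 10 seconds, the circuit opens when at least 3 requests were seen and at least 50% of them failed, it stays open for 5 seconds, and the first request after that is the half-open test. Polly's real implementation differs in details, so treat the timeline as an approximation rather than the exact console output.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Simplified, hypothetical model of this article's settings; not Polly's implementation.
const double failureRatio = 0.5;
const int minimumThroughput = 3;
const int samplingSeconds = 10;
const int breakSeconds = 5;

var state = "Closed";                                   // Half-Open lasts for one test request here
var openedAt = 0;
var window = new List<(int Second, bool Success)>();    // outcomes recorded while Closed
var timeline = new List<string>();

// One request per second, starting at the beginning of a 20-second cycle.
for (var second = 0; second < 30; second++)
{
    // Same rule as GetUnstable: the first 12 seconds of each cycle fail.
    var downstreamSucceeds = second % 20 >= 12;
    var status = downstreamSucceeds ? "200" : "500";
    string outcome;

    if (state == "Open" && second - openedAt < breakSeconds)
    {
        outcome = "rejected, circuit open (no downstream call)";
    }
    else if (state == "Open")
    {
        // Break duration elapsed: this request is the Half-Open test request.
        window.Clear();
        if (downstreamSucceeds)
        {
            state = "Closed";
            outcome = "200, test succeeded, circuit closed";
        }
        else
        {
            openedAt = second;
            outcome = "500, test failed, circuit re-opened";
        }
    }
    else
    {
        // Closed: the request reaches the downstream service and is recorded.
        window.Add((second, downstreamSucceeds));
        window.RemoveAll(entry => entry.Second <= second - samplingSeconds);
        var failures = window.Count(entry => !entry.Success);
        var shouldOpen = !downstreamSucceeds
            && window.Count >= minimumThroughput
            && (double)failures / window.Count >= failureRatio;

        if (shouldOpen)
        {
            state = "Open";
            openedAt = second;
            window.Clear();
        }

        outcome = shouldOpen ? status + ", circuit opened" : status;
    }

    timeline.Add($"t={second,2}s  {outcome}");
}

timeline.ForEach(Console.WriteLine);
```

In this model, the circuit opens on the third failure (t=2s), re-opens after a failed test at t=7s, and closes after a successful test at t=12s. When the next failure phase starts at t=20s, it only opens again at t=24s, once half of the requests in the last 10 seconds have failed, because the window still contains the successes from the recovery phase.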

Conclusion

In this article, I explained the Circuit Breaker pattern and demonstrated how to configure it with Polly v8 using HttpCircuitBreakerStrategyOptions. The circuit breaker protects your application from wasting resources on a failing service while giving the downstream service time to recover.

The three states (Closed, Open, and Half-Open) work together to detect failures, stop traffic, and gradually resume requests when the service recovers. In most real-world scenarios, it’s best to combine the circuit breaker with a retry strategy (covered in the previous article).

In the next article, I will cover the Fallback and Timeout strategies with Polly.

This is the link for the project in GitHub: https://github.com/henriquesd/HttpResilienceDemo

If you like this demo, I kindly ask you to give a ⭐ in the repository.

Thanks for reading!

References