Prompt engineering is the process of designing and refining inputs to guide an AI model’s behaviour and responses, to maximise the likelihood of the desired outcome. Different prompting techniques can be applied depending on the problem and the type of result you want to achieve. In this article, I present the Chain-of-Thought (CoT), Skeleton-of-Thought (SoT) and Tree-of-Thought (ToT) techniques.

Chain-of-Thought (CoT)

Chain-of-Thought (CoT) is a prompt engineering technique in which the prompt instructs a Large Language Model (LLM) to explain its reasoning step by step, instead of jumping directly from a question to a final answer. This approach helps the model handle complex tasks involving logic, arithmetic, or multi-stage planning more accurately.

A benefit of this method is that, by writing out its thought process, the model can use each intermediate step as context for the next one within the same response. To use CoT in your prompt, explicitly instruct the model to break the task into steps and to explain the result of each step. For example:

Explain the steps necessary to migrate an e-commerce application

from a monolith to a microservice architecture.

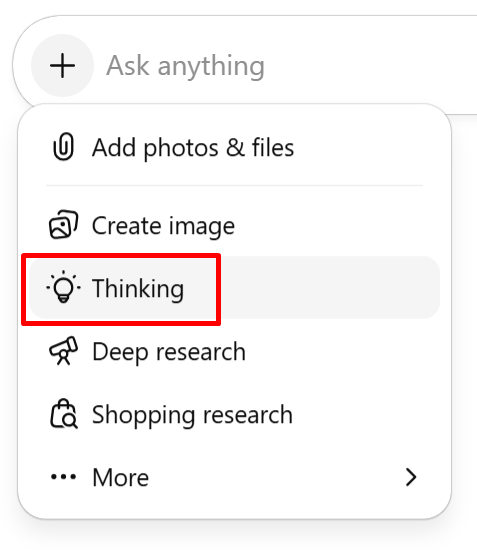

Break the task into steps, and output the result of each step as you

perform it.CoT method is already incorporated in many AIs. On ChatGPT, for example, you can select this by choosing the option “Thinking”:

You can also define explicit stopping instructions for the model (guardrails) to prevent infinite loops or excessive, unnecessary reasoning. For example:

Stop once you are confident that the answer is correct.

You can also combine In-Context Learning (ICL) with CoT, and define a role/persona, goal and output format in your prompt (to learn more about ICL, see my article on the topic).
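If you build prompts in code rather than typing them by hand, the CoT instruction, the stopping guardrail and the ICL-style role and output format can be kept as separate, reusable pieces. The Python sketch below only assembles the prompt text; `build_cot_prompt` and its parameters are hypothetical names, and sending the string to a model is left to whichever LLM client you use.

```python
from typing import List, Optional


def build_cot_prompt(
    task: str,
    role: Optional[str] = None,
    output_format: Optional[str] = None,
    stop_rule: str = "Stop once you are confident that the answer is correct.",
) -> str:
    """Assemble a Chain-of-Thought prompt from reusable parts (hypothetical helper)."""
    parts: List[str] = []
    if role:
        # ICL-style persona: tells the model whose perspective to adopt.
        parts.append(f"You are {role}.")
    parts.append(task)
    # The CoT instruction itself: ask for explicit, step-by-step reasoning.
    parts.append(
        "Break the task into steps, and output the result of each step as you perform it."
    )
    # Guardrail: an explicit stopping criterion to avoid unnecessary reasoning.
    parts.append(stop_rule)
    if output_format:
        parts.append(f"Output format: {output_format}")
    return "\n".join(parts)


prompt = build_cot_prompt(
    task=(
        "Explain the steps necessary to migrate an e-commerce application "
        "from a monolith to a microservice architecture."
    ),
    role="an experienced software architect",
    output_format="a numbered list of steps, followed by a one-paragraph summary",
)
print(prompt)
```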

Advantages of CoT

- Enhanced Complex Problem Solving: Significant improvement in the model’s ability to solve complex problems by breaking them into manageable parts.

- Explicit Reasoning: Allows the model to demonstrate its thought process step-by-step.

- Transparency and Auditability: Makes the model’s decision-making process visible, facilitating the verification of the logic used.

Limitations of CoT

- Longer Outputs: Generates more text, which increases token cost and latency and can run into token limits.

- Risk of Noise: Can introduce errors or “hallucinations” if the model generates an incorrect chain of logic.

- Model Requirements: Requires a model sufficiently trained to understand and apply step-by-step reasoning with high quality.

- Unnecessary Length: Without specific stopping criteria/guardrails, the model may unnecessarily prolong the reasoning process.

Structural Delimiters

Models like GPT and Claude work quite well when the prompt uses explicit delimiters. A technique recommended by Anthropic is to use XML tags to separate the model's reasoning from the final answer. This helps organise the communication between humans and LLMs: it improves readability, simplifies extracting the part of the output you need, and makes the prompt easier to audit. These are some examples of tags:

- <context>: Used to provide background information, reference documents, or the "setting" for the task. It tells the AI what it needs to know before it starts working.

- <thought>: Often used in system prompts to encourage the model to work through ideas before giving its answer. It lets the model "think out loud" in a dedicated section that can be hidden from the end user.

- <reasoning>: Similar to <thought>, but specifically focused on the logic used to reach a conclusion. This is great for debugging why an AI gave a specific answer.

- <step>: Used to break down a complex task into a sequence. You might ask the AI to wrap each part of its process in these tags to ensure it doesn't skip any logic.

- <answer>: Used to wrap the final output requested by the user. This makes it very easy for a developer to programmatically "grab" the result from the AI's response.

- <final_decision>: Generally used when the AI has multiple options under analysis, to commit to one specific choice at the end.
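Because the final output sits inside a known tag, pulling it out of the response takes only a few lines of code. A minimal sketch using Python's standard `re` module, assuming the model emits well-formed tags and does not nest tags of the same name; models do not always comply, so the helper returns `None` when a tag is missing. The helper names and the sample response are made up for illustration.

```python
import re
from typing import List, Optional


def extract_tag(response: str, tag: str) -> Optional[str]:
    """Return the text inside the first <tag>...</tag> pair, or None if absent."""
    # re.DOTALL lets '.' match newlines, since tagged sections usually span lines.
    # The non-greedy '.*?' stops at the first closing tag instead of the last one.
    pattern = rf"<{re.escape(tag)}>(.*?)</{re.escape(tag)}>"
    match = re.search(pattern, response, re.DOTALL)
    return match.group(1).strip() if match else None


def extract_all(response: str, tag: str) -> List[str]:
    """Return the contents of every <tag>...</tag> pair, in order of appearance."""
    pattern = rf"<{re.escape(tag)}>(.*?)</{re.escape(tag)}>"
    return [m.strip() for m in re.findall(pattern, response, re.DOTALL)]


# A made-up model response, used only to illustrate the parsing.
response = """<reasoning>
Option A is cheaper; option B scales better.
</reasoning>
<answer>
Use option A for now.
</answer>"""

print(extract_tag(response, "answer"))   # Use option A for now.
print(extract_tag(response, "context"))  # None
```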

For demonstration purposes, let's write a prompt using some of these tags:

You are a Lead Software Architect designing a Scalable Subscription Billing Engine for a global SaaS platform that handles recurring payments and automated retries for failed transactions.

Document your step-by-step reasoning between <thought> tags, using <step> tags within that section to break down your logic by specific milestones, and provide your final architectural solution and database schema recommendations between <answer> tags, focusing on how you ensure 'exactly-once' processing for monthly billings.

By using these XML tags in the prompt, we transform a simple text box into a structured execution environment.
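A fixed tag layout also lets you check, before using the result, that the model actually followed the requested structure. The sketch below assumes the reply uses exactly the <thought>, <step> and <answer> tags from the prompt above, with <step> tags only inside <thought>; `parse_reply` and `StructuredReply` are hypothetical names, and the sample reply is invented and shortened.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class StructuredReply:
    steps: List[str]  # contents of each <step> tag, in order
    answer: str       # contents of the <answer> tag


def sections(text: str, tag: str) -> List[str]:
    """Contents of every <tag>...</tag> pair; assumes same-named tags are not nested."""
    pattern = rf"<{re.escape(tag)}>(.*?)</{re.escape(tag)}>"
    return [s.strip() for s in re.findall(pattern, text, re.DOTALL)]


def parse_reply(reply: str) -> StructuredReply:
    """Check that a reply follows the <thought>/<step>/<answer> layout requested in the prompt."""
    thoughts = sections(reply, "thought")
    answers = sections(reply, "answer")
    if len(thoughts) != 1 or len(answers) != 1:
        raise ValueError("expected exactly one <thought> and one <answer> section")
    # <step> tags only count when they sit inside the <thought> section.
    steps = sections(thoughts[0], "step")
    if not steps:
        raise ValueError("the <thought> section contains no <step> tags")
    return StructuredReply(steps=steps, answer=answers[0])


# A made-up reply, shortened for illustration.
reply = """<thought>
<step>Milestone 1: model subscriptions, invoices and payment attempts.</step>
<step>Milestone 2: design the retry flow for failed transactions.</step>
</thought>
<answer>Final architecture and schema recommendations go here.</answer>"""

parsed = parse_reply(reply)
print(len(parsed.steps), parsed.answer)
```

If validation fails, you can re-send the prompt or ask the model to reformat its reply; that retry logic is left out of the sketch.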

Skeleton-of-Thought (SoT)

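Skeleton-of-Thought (SoT) is a strategy where we define a “skeleton” (like a “blueprint”) for the LLM to follow in its answer. This method is useful when you need to control the structure and format of the output. In the original Skeleton-of-Thought paper, the model drafts the skeleton itself in a first step and then expands each point; in the variant shown here, we provide the skeleton directly in the prompt. For example: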

You are an experienced software engineer.

Your task is to summarise the advantages and disadvantages of a microservice architecture.

For that, structure your answer with the following topics:

- Context of the problem

- Advantages and disadvantages

- Technical conclusion

SoT can be useful for creating documentation, templates, summaries, splitting the answer into topics, etc.
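The Skeleton-of-Thought paper listed in the references pairs the skeleton with a second stage: each point is expanded by its own independent request, so the requests can run concurrently to reduce latency. A minimal sketch of that stage in Python, reusing the three topics from the prompt above as the skeleton. `call_llm` is a hypothetical placeholder for your real LLM client; here it is a stub that echoes the point so the example runs offline.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List


def call_llm(prompt: str) -> str:
    # Hypothetical stand-in for a real LLM client call; replace with your provider's SDK.
    return f"[expansion of: {prompt.splitlines()[-1]}]"


def expand_skeleton(question: str, skeleton: List[str], max_workers: int = 4) -> str:
    """Expand every skeleton point independently and in parallel, then reassemble in order."""

    def expand(point: str) -> str:
        # Each request sees the question and only its own point, so requests are independent.
        return call_llm(
            f"Question: {question}\n"
            f"Write two or three sentences for this point only:\n{point}"
        )

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in input order, so the skeleton order is preserved.
        bodies = list(pool.map(expand, skeleton))
    return "\n\n".join(f"{point}\n{body}" for point, body in zip(skeleton, bodies))


skeleton = ["Context of the problem", "Advantages and disadvantages", "Technical conclusion"]
result = expand_skeleton("Summarise the pros and cons of a microservice architecture.", skeleton)
print(result)
```

Because each request sees only its own point, this works best when the points are genuinely independent; a conclusion that depends on the other sections may still need a final, sequential pass.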

Tree-of-Thought (ToT)

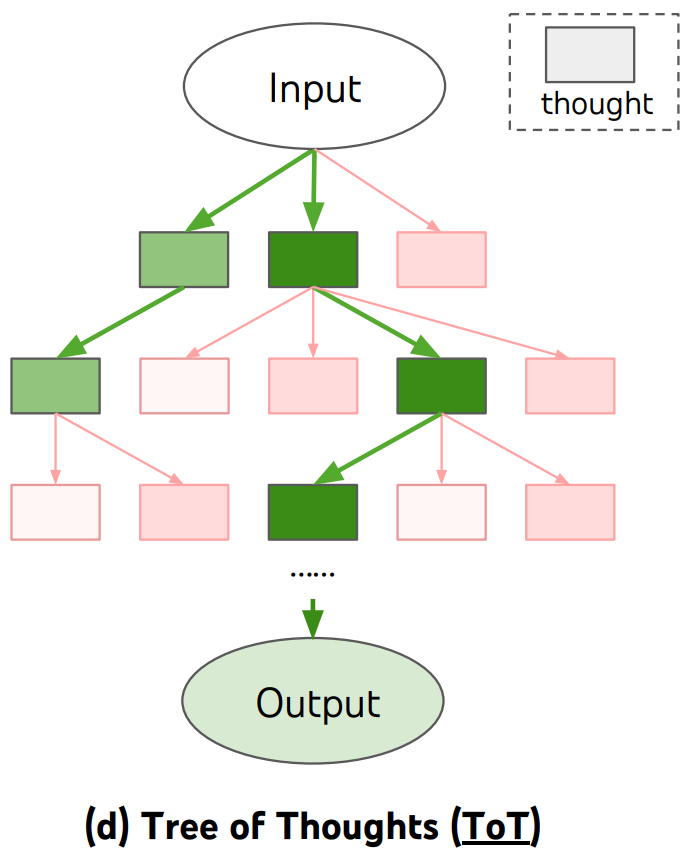

Tree of Thought (ToT) is a prompt technique that extends the traditional Chain of Thought (CoT) technique. While CoT follows a linear path of reasoning, ToT allows LLMs to explore multiple parallel or alternative reasoning paths simultaneously. This method is very useful when the task has multiple possible solutions.

Think of it as a mental map where the model does not just jump to the first conclusion it finds. Instead, it “branches out” different ideas, evaluates the viability of each branch, and can even backtrack if a particular path leads to a dead end. This mimics human deliberate problem-solving, where we weigh various strategies before committing to a final decision.

The illustration below from Arxiv, demonstrates how the ToT flow works:

In a nutshell, once the model receives the input, it creates multiple branches, analyses each of them, and filters for the best answer or solution. A very nice thing about this method is that it can surface solutions the user was not aware of; the model can evaluate all of them and present the best answer based on the requirements stated in the prompt. For example:

You are an experienced software engineer in distributed systems architecture.

A client wants to split their monolith into microservices.

Generate multiple reasoning paths for performing this migration efficiently.

For each path, indicate advantages and disadvantages regarding maintainability, scalability, and operational cost.

At the end, recommend the most suitable approach.

You can also specify multiple personas to evaluate the problem, and have them deliberate until they reach the best conclusion. For example:

Three different experts are answering this question: a senior software engineer, a senior cloud engineer, and a senior software architect.

All experts make a decision, then share it with the group.

The experts need to consider each answer and evaluate which is the best one for the specified scenario.

They have to reach a conclusion that all of them agree on.

The question is: A global e-commerce platform needs to ensure that a customer in New York and a customer in Tokyo do not buy the "last" item in stock at the exact same millisecond. What is the best way to achieve this?

Benefits of ToT

- Complex Problem Solving: Excels at tasks requiring deep planning.

- Error Correction: Because it evaluates each “thought” or branch, the model can identify mistakes early and discard faulty logic before it reaches the final output.

- Comparison of Strategies: It allows the model to look at a problem from multiple angles (e.g., “Strategy A” vs. “Strategy B”) and choose the one most likely to succeed.
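The branch, evaluate and prune loop described in this section can be sketched as a breadth-first search that keeps only the best few branches at each level, in the spirit of the BFS variant in the Tree of Thoughts paper. This is a toy sketch: `propose` and `score` are hypothetical stand-ins for two LLM calls (one suggesting next thoughts, one rating a branch), replaced here by deterministic stubs with made-up ratings so the control flow can run offline. The `depth` and `beam_width` parameters cap how deep and how wide the tree can grow.

```python
from typing import Callable, List, Tuple

Path = Tuple[str, ...]  # a branch of the tree: the sequence of thoughts chosen so far


def tree_of_thought(
    propose: Callable[[Path], List[str]],
    score: Callable[[Path], float],
    depth: int = 2,
    beam_width: int = 2,
) -> Path:
    """Breadth-first Tree-of-Thought search keeping the best `beam_width` branches per level."""
    frontier: List[Path] = [()]  # start at the root: no thoughts yet
    for _ in range(depth):
        # Branch: extend every surviving path with each proposed next thought.
        candidates = [path + (t,) for path in frontier for t in propose(path)]
        if not candidates:
            break  # nothing left to expand
        # Evaluate and prune: weaker branches are discarded here, before they can
        # reach the final answer.
        frontier = sorted(candidates, key=score, reverse=True)[:beam_width]
    return max(frontier, key=score)


# Hypothetical stubs standing in for the two LLM calls. Level 1 picks a migration
# strategy, level 2 a data strategy; the ratings are made up.
STRATEGIES = {"strangler fig": 0.8, "big-bang rewrite": 0.3, "modular monolith first": 0.6}
DATA = {"database per service": 0.5, "shared database": 0.2}
RATINGS = {**STRATEGIES, **DATA}


def propose(path: Path) -> List[str]:
    return list(STRATEGIES) if not path else list(DATA)


def score(path: Path) -> float:
    return sum(RATINGS[thought] for thought in path)


best = tree_of_thought(propose, score, depth=2, beam_width=2)
print(best)  # ('strangler fig', 'database per service')
```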

When using ToT, we can also add guardrails that keep the model’s reasoning focused and efficient. For that, we can:

1. Control the branch expansion by limiting the depth and number of alternatives. For example, we could slightly modify the previous prompt to match the following:

Present exactly three distinct strategic paths for performing this migration.

For each path, develop two levels of sub-steps.

2. Use explicit decision criteria. Rather than leaving the “best” choice to the model’s intuition, you can guide it with specific parameters such as “lowest cost” or “highest reliability”. Using the prompt from the previous topic, we could modify it to include:

Evaluate these paths using the following priority order:

1. Operational Cost (must be minimized),

2. Maintainability, and

3. Scalability.

3. Use iterative re-evaluation by asking the AI to review its choices once all paths have been explored. This way it can correct early logic errors if a later branch proves superior, mimicking a self-correcting human thought process. Using the previous prompt again, we could also include:

After exploring all three paths, look back at your initial assumptions. If any path presents a hidden risk in operational cost that you did not initially mention, revise your analysis.

Now, let’s combine all these guardrails and apply them to the previous prompt:

You are an experienced software engineer in distributed systems architecture.

A client wants to split their monolith into microservices.

Present exactly three distinct strategic paths for performing this migration.

For each path, develop two levels of sub-steps.

Evaluate these paths using the following priority order:

1. Operational Cost (must be minimized),

2. Maintainability, and

3. Scalability.

After exploring all three paths, look back at your initial assumptions. If any path presents a hidden risk in operational cost that you did not initially mention, revise your analysis.

By combining these guardrails, we transform the LLM from a simple text generator into a deliberate problem-solver. This structured prompt does not just ask for a solution; it enforces a logical process of exploration, evaluation, and self-correction.
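The priority order in this prompt describes a strict, lexicographic ranking: a cheaper path always wins, and maintainability and scalability only break ties. If you also ask the model to rate each path per criterion, you can re-check its recommendation with a few lines of code. A minimal sketch; `PathRating`, `rank_paths`, the path names and the 1 to 5 ratings are all made up for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PathRating:
    name: str
    operational_cost: int  # 1 (cheap) .. 5 (expensive); lower is better
    maintainability: int   # 1 .. 5; higher is better
    scalability: int       # 1 .. 5; higher is better


def rank_paths(paths: List[PathRating]) -> List[PathRating]:
    """Order paths by the prompt's priority: cost first, then maintainability, then scalability."""
    # Tuples compare element by element, so later criteria only matter on ties.
    # Negating the "higher is better" ratings lets one ascending sort handle all three.
    return sorted(paths, key=lambda p: (p.operational_cost, -p.maintainability, -p.scalability))


paths = [
    PathRating("Strangler fig", operational_cost=2, maintainability=4, scalability=4),
    PathRating("Big-bang rewrite", operational_cost=5, maintainability=5, scalability=5),
    PathRating("Modular monolith first", operational_cost=2, maintainability=4, scalability=3),
]
for rank, path in enumerate(rank_paths(paths), start=1):
    print(rank, path.name)
```

Here the big-bang rewrite ranks last despite having the best maintainability and scalability ratings, because operational cost dominates; a weighted score would behave differently.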

Conclusion

Techniques like Chain-of-Thought, Skeleton-of-Thought, and Tree-of-Thought allow us to get better results when working with Artificial Intelligence. Using these techniques, we are no longer just “asking questions” to AI; instead, we guide models to reason, plan, and self-correct, bringing them closer to a collaborative problem-solving partner. Note that these techniques can be used in isolation or combined; for example, we can use CoT + SoT + ToT together to build a single high-precision prompt.

In the next article, I’m going to present other prompt engineering techniques like Self-Consistency, Directional Stimulus Prompting and ReAct.

References

Prompt Engineering — Microsoft

Chain of thought prompting — Microsoft

Tree of Thoughts (ToT) — Prompt Engineering Guide

Skeleton-of-Thought: Prompting LLMs for Efficient Parallel Generation — Arxiv

Chain-of-Thought Prompting Elicits Reasoning in Large Language Models — Arxiv

Tree of Thoughts: Deliberate Problem Solving with Large Language Models — Arxiv