Prompt engineering involves crafting the instructions you give to an AI to guide its behaviour and maximise the quality of the results. For that, different techniques can be used. In this article, I present the Self-Consistency, Direct Stimulus Prompting (DST), and ReAct (Reasoning + Acting) techniques.

Self-Consistency

Self-Consistency technique uses Chain-of-Thought, but the task is executed multiple times (generally from 5 up to 10 times). The outputs are then compared, and the final output is determined by a majority vote or a consistency metric. The idea behind this is that while the model may make errors in a specific reasoning chain, the most reliable answers tend to converge across multiple executions. When executed multiple times, the answers are compared to identify those that are consistent, which the model then selects as the final result.

The Self-Consistency technique is a good option in scenarios where there is no single “correct” answer, but rather a “most logical” one that emerges through repetition. Here are the primary practical applications:

- Cloud & Infrastructure Estimates: When calculating cost and capacity (e.g., estimating storage or cloud spend). Since these involve multiple variables, running the prompt multiple times helps catch outlier logic and ensures the math is sound.

- Environment Sizing and Planning: Ensures hardware recommendations remain stable across different technical perspectives.

- Numerical & Algorithmic Validation: It is ideal for validating numerical results or algorithmic predictions. If the model reaches the same “result” through different calculation steps, your confidence in the output increases.

- Technical Hypothesis Verification: To test technical hypotheses against multiple criteria. It ensures that a proposed solution holds up under different architectural perspectives.

- Architectural Logic Comparisons: It helps in comparing internal logic during architectural reviews or tests, ensuring that the chosen design pattern is consistently identified as the optimal choice across multiple iterations.

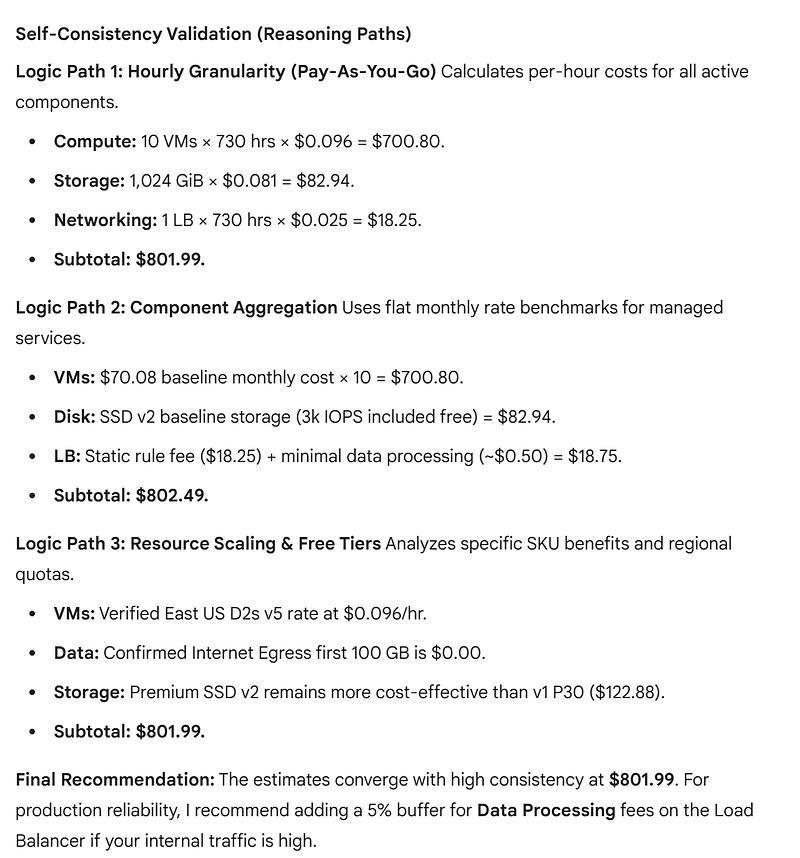

To illustrate, let’s look at how the model processes your Azure cost estimation prompt using Self-Consistency. Instead of giving one answer, the system generates three (or more) independent paths:

You are an Azure Solutions Architect.

Provide a monthly cost estimate for the following production environment in

East US:

- Compute: 10 Azure Virtual Machines (D2s v5 instances).

- Storage: 1 TB of Premium SSD v2 Managed Disks.

- Networking: 1 Standard Load Balancer.

- Data: 100 GB of outbound bandwidth (Egress) per month.

For that, use the Self-Consistnecy technique.This is the output of this query, provided by ChatGPT:

As you can see, it ran multiple independent logic paths, and caught subtle details (like the specific hourly rate of the D2s v5 in the East US region and the fact that the first 100 GB of egress is free). The convergence of Logic Path 1 and Logic Path 3 at exactly $801.99 provides a “mathematical consensus,” giving you the confidence that the estimate is not just a hallucination, but a statistically grounded result. This redundancy is what makes the technique a great option for high-stakes infrastructure planning.

Direct Stimulus Prompting (DST)

Directional Stimulus Prompting (DST) is a technique designed to guide a model’s response by using specific keywords or commands, influencing the style, format, focus, and reasoning depth of the model. This can be used when the format of the output is important.

This is a bit different from Skeleton-of-Thought (SoT), as with the DST, you are providing specific “hints” or “anchors” (like keywords or personas) to ensure the model does not drift off-target, effectively narrowing the search space for a more accurate result, while the SoT focus on the structure of the output, forcing the model to follow a specific structure (the skeleton).

For DST, you need to select “stimulus” words that act as functional triggers for the model’s internal logic, for example:

Answer using JSON format

// or

Split the answer into topics

// or

Explain with examples

// etc.DST is a good option to generate technical documentation, comparison lists, comparative reviews, standardised data structures, etc. Compared with SoT, it offers more flexibility, as the model will not be “limited” to follow a structure; instead, it will only be influenced in how to retrieve the output.

ReAct (Reasoning + Acting)

ReAct (Reasoning + Acting) is a prompt technique that makes the LLM combine Chain-of-Thought (CoT) while interacting with external tools, such as APIs, databases, etc. This allows the model to combine the reasoning with external data.

To implement ReAct, the prompt must enforce a strict, iterative cycle. For that, you can use multiple thought-action-observation steps:

- Thought: The model explains its current reasoning and plans the next step.

- Action: The model executes a command to interact with an external system.

- Observation: It processes the feedback received from that external system.

This cycle continues until the model reaches a Final Answer, ensuring that every conclusion is grounded in real-world data rather than just internal logic. This is an example of a prompt using ReAct technique:

You are a SRE Agent.

Your goal is to troubleshoot service outages using a ReAct loop.

Instructions: For each step, you must follow this exact pattern:

Thought: [Your reasoning and what you plan to do next]

Action: [The tool name and input in brackets, e.g., K8s_Logs[payment-v1]]

Observation: [The result returned by the system]

Task: Use the available monitoring tools to find out why the

'Payment-Service' is returning 500 errors. Begin your investigation.Conclusion

When working with AI, techniques such as Self-Consistency, Directional Stimulus Prompting (DST), and ReAct can be applied in specific scenarios to achieve significantly better results for certain types of tasks. Understanding these techniques is important because it helps you identify which approach is best suited for each type of problem.

References

Self-Consistency Improves Chain of Thought Reasoning in Language Models — Arxiv

Scaling Instruction-Finetuned Language Models — Arxiv

ReAct: Synergizing Reasoning and Acting in Language Models — Arxiv